Lectures

You can download the lecture slides here.

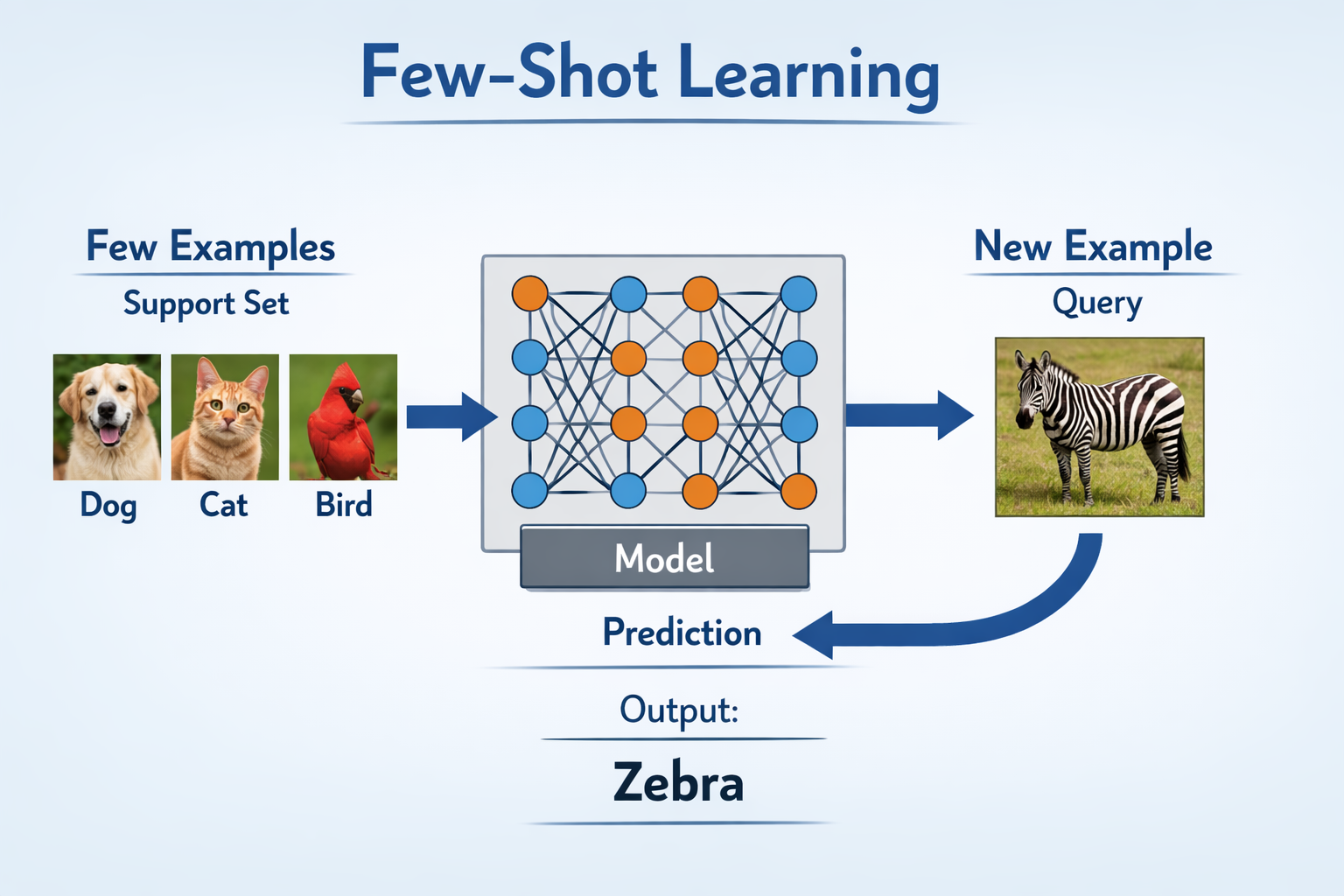

18. Efficient adaptation

[slides]

- Brown et al. (2020). Language Models Are Few-Shot Learners

- Hu et al. (2021). LoRA: Low-Rank Adaptation of Large Language Models

16. Lab 8 Constructed languages

[slides]

- Taguchi & Sproat (2025). IASC: Interactive Agentic System for ConLangs

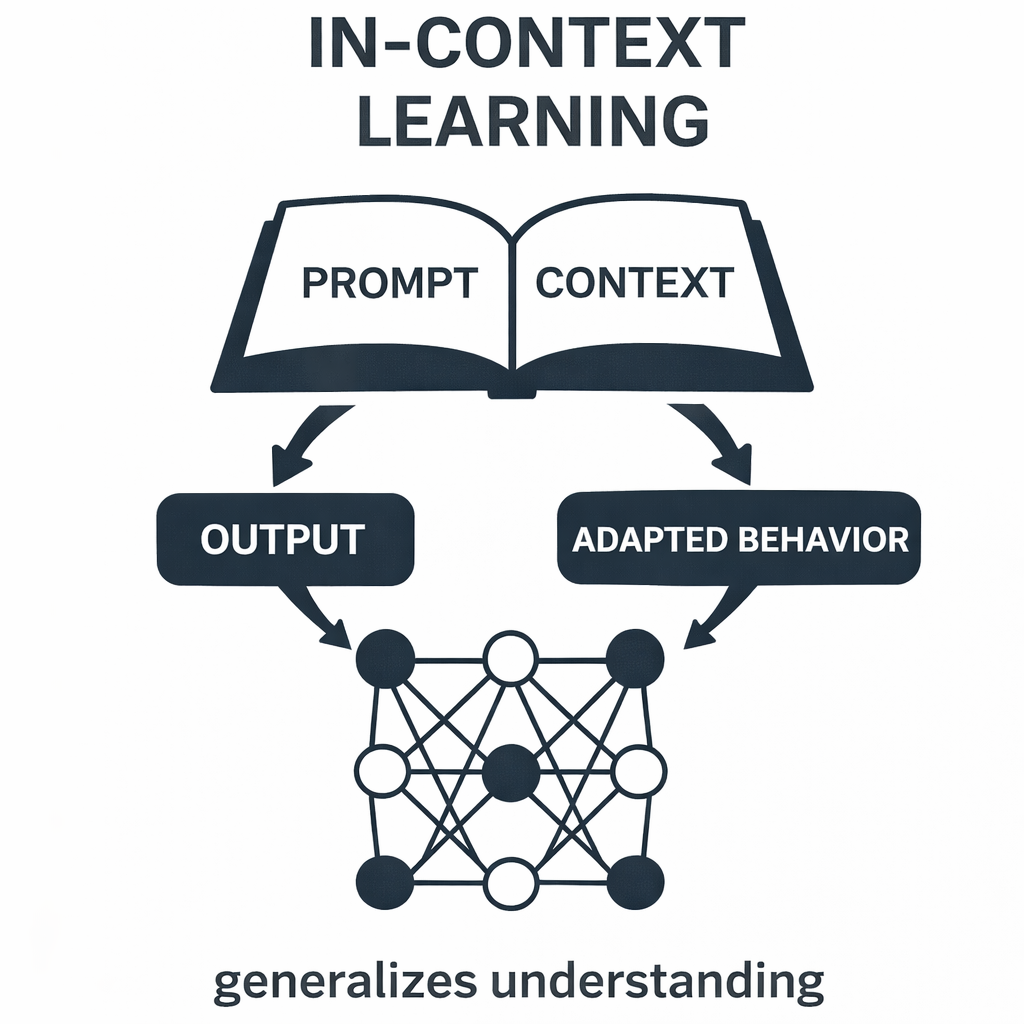

15. Pre/Post-training

[slides]

- SLP, Chapter 9

- Chung et al. (2022). Scaling Instruction-Finetuned Language Models

- Wang et al. (2023). How Far Can Camels Go? Exploring the State of Instruction Tuning on Open Resources

14. Lab 7 Ollama

[slides]

- Huang et al. (2018). Music Transformer; Cool demo.

- Smith (2020). Contextual Word Representations.

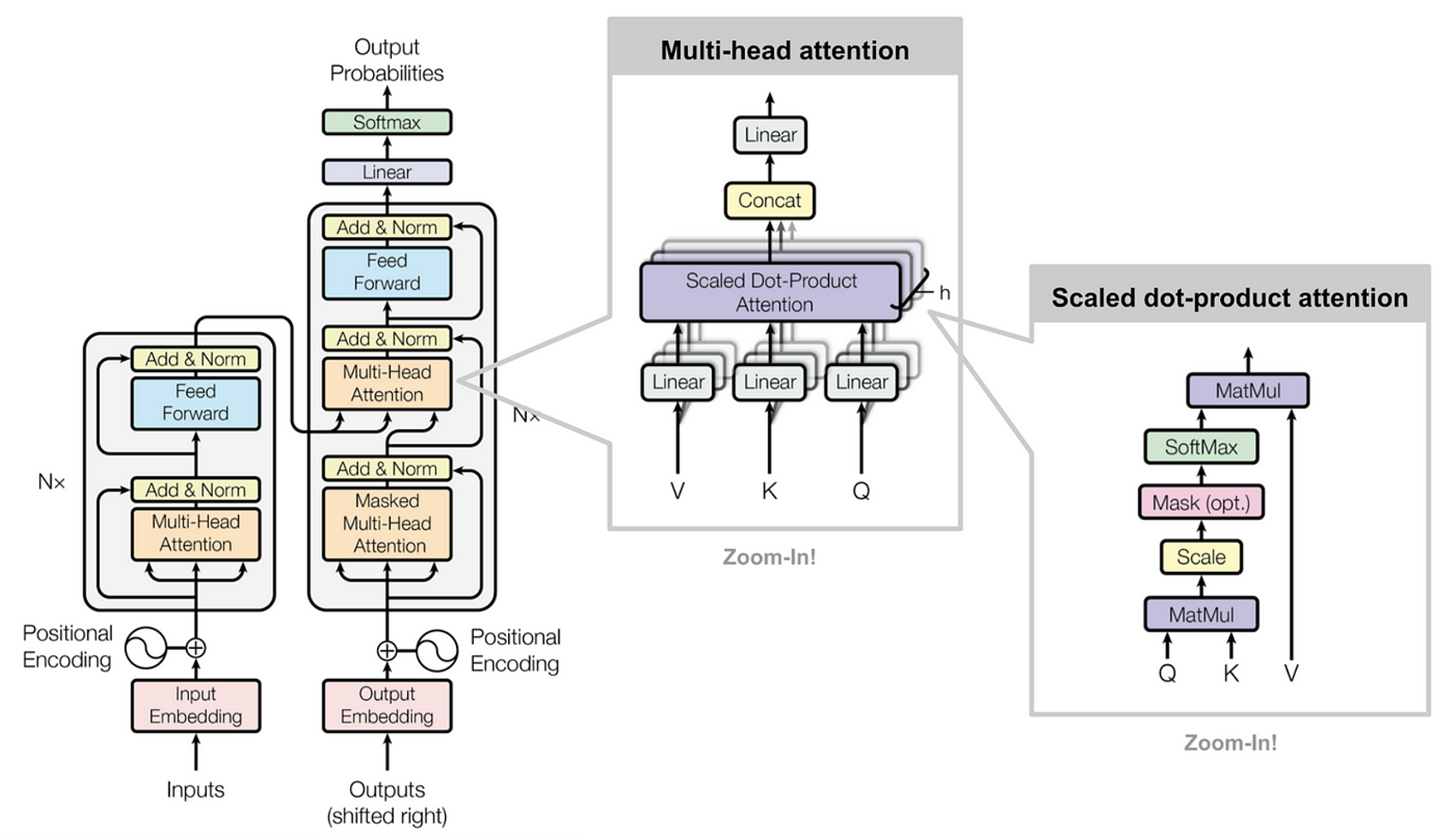

13. Transformers

[slides]

- SLP, Chapter 8

- Devlin et al. (2019). BERT: Pre-training of Deep Bidirectional Transformers

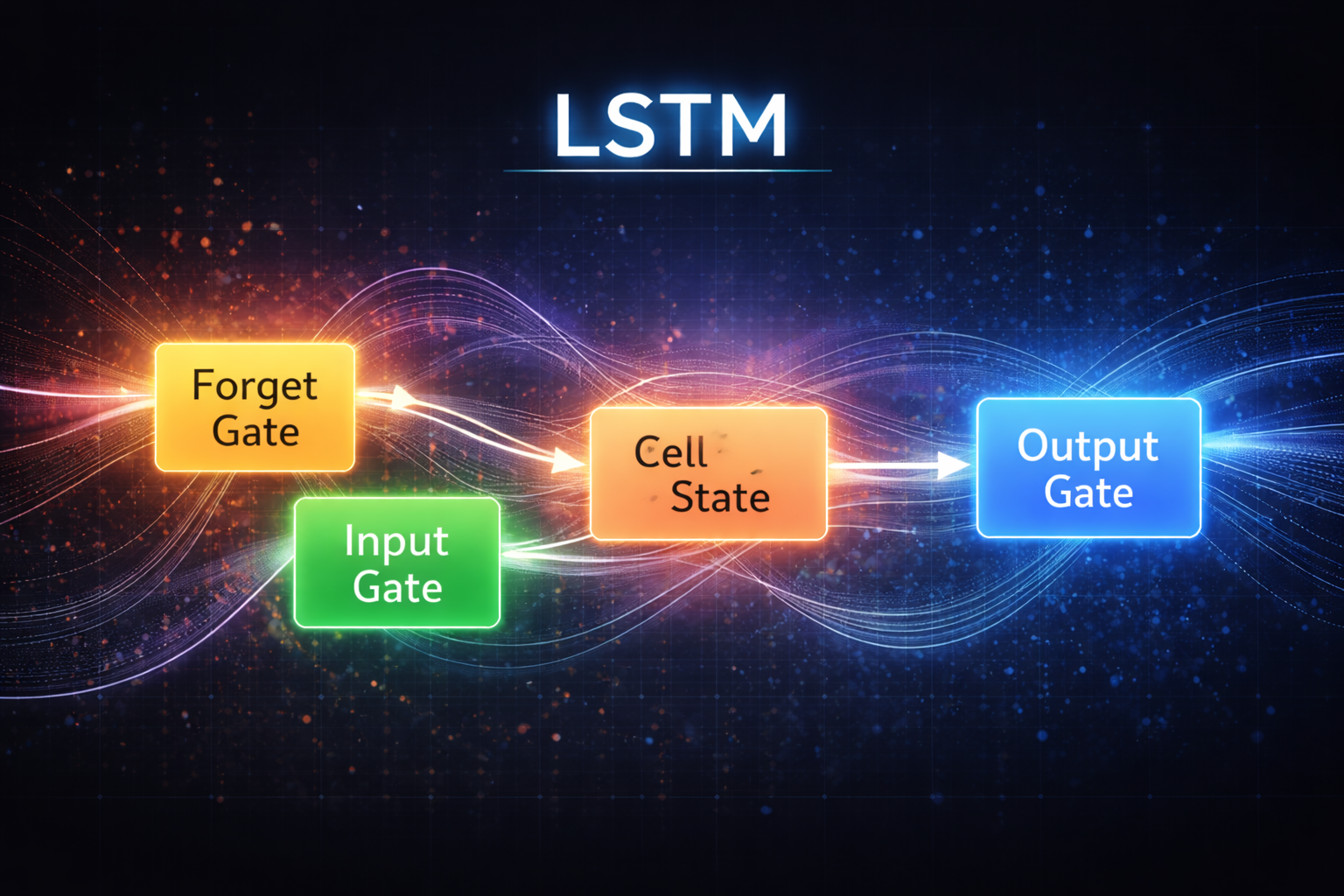

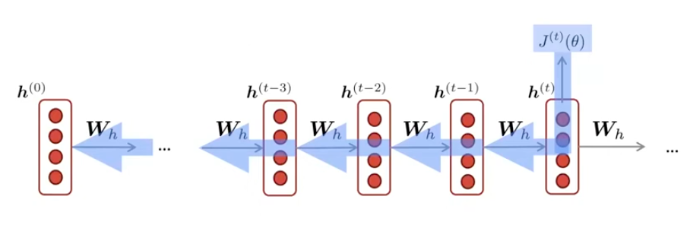

9. Language modeling, RNNs

[slides]

- SLP, Chapter 3

- SLP, Chapter 13

- Sak et al. (2014). Long Short-Term Memory Recurrent Neural Network Architectures for Large Scale Acoustic Modeling

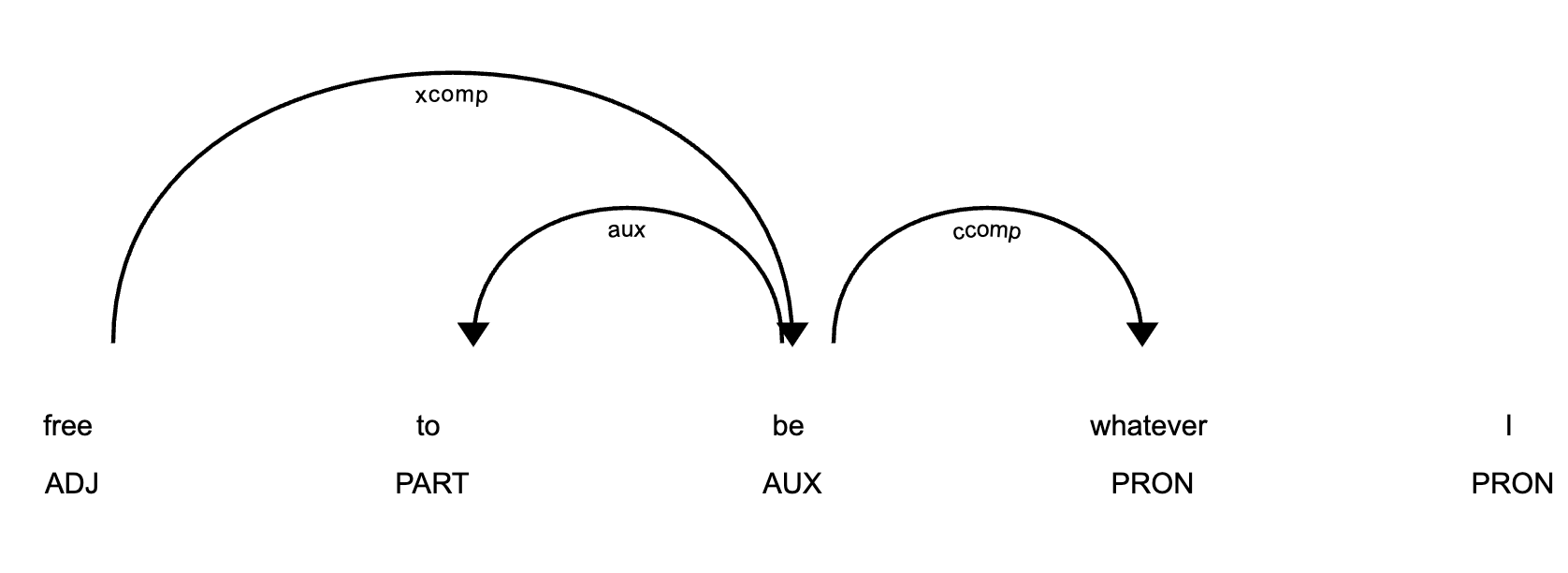

7. Dependency parsing

[slides]

- SLP, Chapter 19

- de Marneffe et al. (2021). Universal Dependencies

- Chen & Manning (2014). A fast and accurate dependency parser using neural networks

5. Neural network

[slides]

- SLP, Chapter 6

- Collobert et al. (2011). Natural language processing (almost) from scratch

4. Lab 2 Word vectors

[slides] [colab]

- Levy, Goldberg, & Dagan (2015). Improving Distributional Similarity with Lessons Learned from Word Embeddings

3. Word vectors

[slides]

- SLP, Chapter 5, Chapter I

- Mikolov et al. (2013). Efficient Estimation of Word Representations in Vector Space

- Pennington, Socher, & Manning (2014). GloVe: Global Vectors for Word Representation

1. Introduction

[slides]

- Manning, C. D. (2022). Human language understanding & reasoning

- Bommasani, R. et al. (2021). On the opportunities and risks of foundation models

Acknowledgment: These course slides are based on materials from CS224N: NLP with Deep Learning @ Stanford University.